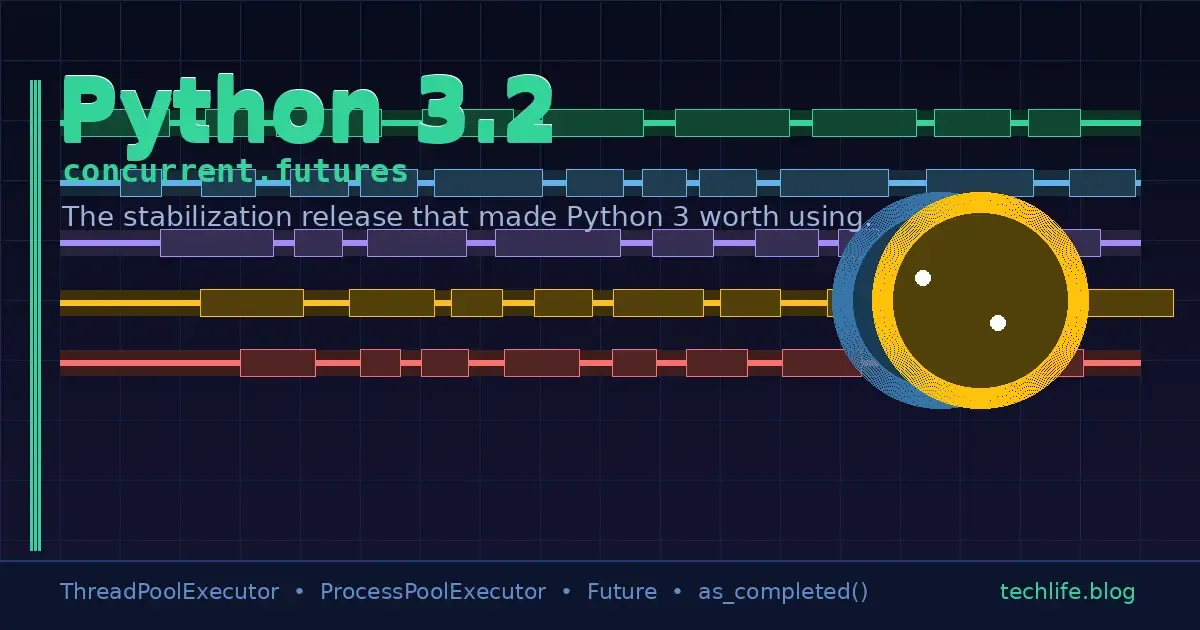

Python 3.2 and concurrent.futures: The Release That Made Python 3 Worth Using

- Turker Senturk

- Software

- 25 Mar, 2026


Let’s be honest about something: Python 3.0 was kind of a disaster. Not a catastrophic, “burn it all down” disaster — more like the kind of disaster where you show up to a party with great intentions, spill wine on the host’s carpet in the first five minutes, and spend the rest of the evening apologizing. Python 3.0 launched in December 2008, broke backward compatibility with half the known universe, and left developers staring at their screens wondering why print "hello" suddenly threw a syntax error.

Python 3.1 was better. But not enough better.

Then came Python 3.2 in February 2011 — and that’s where things actually got interesting.

What Python 3.2 Actually Was: A Redemption Arc

Python 3.2 is the release that doesn’t get nearly enough credit. While it wasn’t the flashiest version — it didn’t ship with a revolutionary new syntax or a paradigm-shifting feature — it was the version that made Python 3 usable for real-world projects. Think of it as the “director’s cut” of Python 3: same core ideas, but polished, debugged, and finally ready for primetime.

Python 3.2 was developed under the language moratorium of PEP 3003, which froze syntax changes so the core team could concentrate on the interpreter and the standard library. In plain English: we’re fixing what we broke in 3.0 and 3.1, and we’re adding some genuinely useful things while we’re at it.

Among those “genuinely useful things” was a module that deserves far more love than it typically gets: concurrent.futures.

But before we dive deep into that, let’s set the scene.

The State of Concurrency Before Python 3.2

If you were writing concurrent code in Python before 3.2, you had two main options, both of which required you to basically fight the language:

Option 1: The threading module. It worked, sort of. But you were managing Thread objects manually, calling .start() and .join() yourself, dealing with Queue objects to pass results around, and generally writing far more boilerplate than any reasonable human should have to write for “run this function five times at once.”

```python
# The old way: threading module, pre-3.2
import threading

results = []
lock = threading.Lock()

def fetch_data(url):
    # imagine this does something useful
    result = f"data from {url}"
    with lock:
        results.append(result)

urls = ["http://example.com/1", "http://example.com/2", "http://example.com/3"]

threads = []
for url in urls:
    t = threading.Thread(target=fetch_data, args=(url,))
    threads.append(t)
    t.start()

for t in threads:
    t.join()

print(results)
```

This works. But look at all that ceremony. You’re managing thread lifecycle, synchronizing access to a shared list, and manually joining threads, all for what is conceptually a very simple operation: map this function over these inputs and collect the results.

Option 2: The multiprocessing module. Introduced in Python 2.6, this gave you true parallelism by spawning separate processes instead of threads (bypassing Python’s infamous GIL). But the API was even more verbose, and getting results back from worker processes required jumping through hoops involving Pool, map, and apply_async.

```python
# multiprocessing.Pool: better, but still awkward for mixed workloads
from multiprocessing import Pool

def square(n):
    return n * n

if __name__ == "__main__":
    with Pool(4) as p:
        results = p.map(square, range(10))
    print(results)
```

That `if __name__ == "__main__"` guard isn’t just a best practice. On platforms that start workers with the spawn method (Windows, and macOS since Python 3.8), every worker re-imports your script. Without the guard, each of those imports would try to create its own pool, and Python aborts with a `RuntimeError` rather than letting the processes multiply until your machine cries.

The fundamental problem was that threading and multiprocessing felt like completely different tools with different APIs, different mental models, and different trade-offs. If you wanted to switch from threading to multiprocessing (or vice versa), you weren’t just flipping a flag — you were essentially rewriting your concurrency code.

Enter concurrent.futures: The Unified Concurrency API

concurrent.futures arrived in Python 3.2 courtesy of PEP 3148, authored by Brian Quinlan. The module’s design philosophy can be summarized in one sentence:

You shouldn’t need to think about threads vs. processes. You should just think about work.

The module introduces two key abstractions:

- `Executor`: the abstract base for “something that runs stuff”
- `Future`: an object representing the result of work that will complete at some point

From these two abstractions, you get two concrete executors:

- `ThreadPoolExecutor`: runs callables in a pool of threads (best for I/O-bound work)
- `ProcessPoolExecutor`: runs callables in a pool of processes (best for CPU-bound work)

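The genius is that both implement the same `Executor` interface. For most code, switching between them is a one-word change, provided the function and its arguments can be pickled when you move to processes.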
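A minimal sketch of that one-word switch, assuming a hypothetical helper named `run_all` (not part of the module) that takes the executor class as a parameter:

```python
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor

def run_all(executor_cls, fn, items, max_workers=4):
    """Run fn over items with whichever executor class is passed in."""
    with executor_cls(max_workers=max_workers) as executor:
        return list(executor.map(fn, items))

if __name__ == "__main__":
    words = ["alpha", "beta", "gamma"]
    print(run_all(ThreadPoolExecutor, len, words))   # threads
    print(run_all(ProcessPoolExecutor, len, words))  # processes, same call
```

Only the class name differs between the two calls. The process version works here because the built-in `len` and the strings can both be pickled.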

ThreadPoolExecutor: Your I/O-Bound Best Friend

Let’s rewrite that threading example from before using concurrent.futures:

```python
from concurrent.futures import ThreadPoolExecutor

def fetch_data(url):
    # imagine this does something useful, like an HTTP request
    return f"data from {url}"

urls = ["http://example.com/1", "http://example.com/2", "http://example.com/3"]

with ThreadPoolExecutor(max_workers=3) as executor:
    results = list(executor.map(fetch_data, urls))

print(results)
# ['data from http://example.com/1', 'data from http://example.com/2', 'data from http://example.com/3']
```

That’s it. No manual thread management. No locks. No `join()`. The `with` statement handles executor shutdown automatically, and `executor.map()` preserves input order in the output, something the old threading approach didn’t even attempt to do elegantly.
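The ordering guarantee is easy to check for yourself. The sketch below assumes a hypothetical `slow_echo` task whose first input is deliberately the slowest, so completion order and input order differ:

```python
import time
from concurrent.futures import ThreadPoolExecutor

def slow_echo(delay):
    """Sleep for `delay` seconds, then hand the value back."""
    time.sleep(delay)
    return delay

delays = [0.3, 0.1, 0.2]  # the first task is the slowest

with ThreadPoolExecutor(max_workers=3) as executor:
    ordered = list(executor.map(slow_echo, delays))

print(ordered)  # same order as `delays`, not completion order
```

The trade-off is that the iterator waits for the first result before yielding anything, even if later tasks finished earlier.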

Using submit() for More Control

executor.map() is great for simple fan-out patterns, but sometimes you want more control. That’s what submit() is for:

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
import random
import time

def process_file(filename):
    # Simulate some I/O-heavy work
    time.sleep(random.uniform(0.1, 0.5))
    return f"processed: {filename}"

filenames = [f"file_{i}.txt" for i in range(6)]

with ThreadPoolExecutor(max_workers=3) as executor:
    # Submit all tasks and get Future objects back
    future_to_file = {
        executor.submit(process_file, fname): fname
        for fname in filenames
    }

    # Process results as they complete (not in submission order!)
    for future in as_completed(future_to_file):
        original_file = future_to_file[future]
        try:
            result = future.result()
            print(f"✓ {result}")
        except Exception as e:
            print(f"✗ {original_file} failed: {e}")
```

Notice `as_completed()`, another gem from the module. It yields futures in completion order, not submission order, which means you start processing results the moment they’re ready instead of waiting for the whole batch.

ProcessPoolExecutor: Breaking Free from the GIL

Here’s Python’s dirty secret: in CPython, the GIL (Global Interpreter Lock) means that only one thread can execute Python bytecode at a time. Threads are great for I/O-bound work (while one thread waits for a network response, another can run), but for CPU-bound work such as image processing, number crunching, or parsing huge files, threads don’t actually parallelize. They take turns.

ProcessPoolExecutor sidesteps the GIL entirely by running work in separate processes. Each process has its own Python interpreter and its own GIL, so they genuinely run in parallel on multiple CPU cores.

And here’s the beautiful part — the API is identical:

```python
from concurrent.futures import ProcessPoolExecutor
import math

def compute_heavy(n):
    """Simulate CPU-intensive work."""
    # Compute the sum of square roots for a range of numbers
    return sum(math.sqrt(i) for i in range(n))

inputs = [5_000_000, 3_000_000, 7_000_000, 4_000_000]

# Thread version (won't truly parallelize due to GIL):
#     with ThreadPoolExecutor(max_workers=4) as executor:

# Process version (true parallelism):
if __name__ == "__main__":
    with ProcessPoolExecutor(max_workers=4) as executor:
        results = list(executor.map(compute_heavy, inputs))
    print(results)
```

Swap `ProcessPoolExecutor` for `ThreadPoolExecutor` (or vice versa), and everything else stays the same. That’s the whole design philosophy right there.

Understanding Futures: The Real Power

A `Future` object is the core concept underlying everything in concurrent.futures. It represents a computation that’s either pending, running, or done (finished or cancelled). Once finished, it holds either a result or an exception.

```python
from concurrent.futures import ThreadPoolExecutor
import time

def slow_operation(seconds, label):
    time.sleep(seconds)
    return f"Done: {label} (after {seconds}s)"

with ThreadPoolExecutor(max_workers=2) as executor:
    future_a = executor.submit(slow_operation, 2, "Task A")
    future_b = executor.submit(slow_operation, 1, "Task B")

    print(f"future_a running? {future_a.running()}")
    print(f"future_b done? {future_b.done()}")

    # Block until result is ready
    result_b = future_b.result(timeout=5)  # 5-second timeout
    print(result_b)  # Prints after ~1 second

    result_a = future_a.result()
    print(result_a)  # Prints after ~2 seconds total
```

Key Future methods:

- `.result(timeout=None)`: blocks until the result is available, then returns it
- `.exception()`: returns the exception if the callable raised one
- `.done()`: returns `True` if the future has finished (with a result or an exception) or was cancelled
- `.running()`: returns `True` if currently executing
- `.cancel()`: attempts to cancel (only works if not yet started)
- `.add_done_callback(fn)`: registers a callback to be called when the future completes
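The last two methods in that list are the least obvious, so here is a minimal sketch of both. It assumes a single-worker pool and two `threading.Event` objects (hypothetical names `started` and `release`) to hold the first task in the running state, which forces the second task to wait in the queue:

```python
import threading
from concurrent.futures import ThreadPoolExecutor

started = threading.Event()   # set once the first task is running
release = threading.Event()   # lets the first task finish
finished = []                 # labels recorded by the callback

def blocker():
    started.set()
    release.wait(timeout=5)
    return "first task done"

def record(future):
    # Callbacks receive the future itself; cancelled futures trigger them too.
    finished.append("cancelled" if future.cancelled() else future.result())

with ThreadPoolExecutor(max_workers=1) as executor:
    running = executor.submit(blocker)
    started.wait(timeout=5)            # the only worker is now busy
    queued = executor.submit(blocker)  # has to wait in the queue
    running.add_done_callback(record)
    queued.add_done_callback(record)

    cancelled_queued = queued.cancel()    # still pending, so this succeeds
    cancelled_running = running.cancel()  # already running, so this fails
    release.set()

print(cancelled_queued, cancelled_running, finished)
# True False ['cancelled', 'first task done']
```

Note that a callback runs in whichever thread completes or cancels the future, so it should be quick and thread-safe.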

Exception Handling: No More Silent Failures

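One of the nicest things about concurrent.futures is how it handles exceptions. With raw threads, an exception raised inside a `Thread` target never reaches the code that started the thread: by default Python prints a traceback to stderr and the thread simply ends, so the rest of your program carries on unaware unless you caught the error yourself. With `Future`, exceptions are captured and re-raised when you call `.result()`: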

```python
from concurrent.futures import ThreadPoolExecutor

def might_fail(n):
    if n == 3:
        raise ValueError(f"I refuse to process {n}")
    return n * n

with ThreadPoolExecutor(max_workers=2) as executor:
    futures = [executor.submit(might_fail, i) for i in range(5)]

    for i, future in enumerate(futures):
        try:
            print(f"Result {i}: {future.result()}")
        except ValueError as e:
            print(f"Caught exception for task {i}: {e}")
```

Output:

```
Result 0: 0
Result 1: 1
Result 2: 4
Caught exception for task 3: I refuse to process 3
Result 4: 16
```

The exception is preserved on the `Future` object and only raised when you call `.result()`. Your main thread doesn’t crash. You handle errors exactly where and when you want to.
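If you would rather inspect failures than catch them, `.exception()` returns the stored exception (or `None`) without raising. A minimal sketch, assuming a hypothetical `parse_port` task that fails on non-numeric input:

```python
from concurrent.futures import ThreadPoolExecutor

def parse_port(text):
    """Hypothetical task: int() raises ValueError on bad input."""
    return int(text)

with ThreadPoolExecutor(max_workers=2) as executor:
    futures = {text: executor.submit(parse_port, text) for text in ["8080", "http"]}

# The with block has exited, so every future is finished.
errors = {text: f.exception() for text, f in futures.items()}
good = {text: f.result() for text, f in futures.items() if errors[text] is None}

print(good)
print({text: type(err).__name__ for text, err in errors.items() if err})
```

This style suits batch jobs where you want to collect every failure into a report instead of stopping at the first one.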

Real-World Pattern: Parallel HTTP Requests

Here’s a pattern you’ll actually use in real projects — fetching multiple URLs concurrently:

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
import time
import urllib.error
import urllib.request

def fetch_url(url):
    """Fetch a URL and return (url, status_code, elapsed_ms)."""
    start = time.time()
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            status = response.status
    except urllib.error.HTTPError as e:
        # urlopen raises on 4xx/5xx responses; keep the status code
        status = e.code
    except Exception:
        # DNS failure, timeout, refused connection, etc.
        status = None
    elapsed = (time.time() - start) * 1000
    return url, status, int(elapsed)

urls = [
    "https://httpbin.org/delay/1",
    "https://httpbin.org/status/200",
    "https://httpbin.org/status/404",
    "https://httpbin.org/json",
]

print("Fetching URLs concurrently...\n")
start_total = time.time()

with ThreadPoolExecutor(max_workers=4) as executor:
    future_to_url = {executor.submit(fetch_url, url): url for url in urls}

    for future in as_completed(future_to_url):
        url, status, ms = future.result()
        status_display = str(status) if status else "ERROR"
        print(f" [{status_display}] {url} — {ms}ms")

total_ms = int((time.time() - start_total) * 1000)
# The sequential figure assumes roughly one second per request
print(f"\nTotal time: {total_ms}ms (vs ~{len(urls) * 1000}ms sequential)")
```

Without concurrency, four requests that each take about a second would need about four seconds in total. With `ThreadPoolExecutor`, the requests overlap and the batch finishes in roughly the time of the slowest one, which here is the `/delay/1` endpoint plus network latency.

Real-World Pattern: CPU-Bound Data Processing

Now let’s flip to the CPU-bound side. Say you have a list of large datasets and need to apply an expensive transformation to each one:

```python
from concurrent.futures import ProcessPoolExecutor
import math
import random

def analyze_dataset(data):
    """Simulate an expensive statistical computation."""
    n = len(data)
    mean = sum(data) / n
    variance = sum((x - mean) ** 2 for x in data) / n
    std_dev = math.sqrt(variance)

    # Simulate more complex work
    percentiles = sorted(data)[::n // 100 or 1]

    return {
        "mean": round(mean, 4),
        "std_dev": round(std_dev, 4),
        "min": min(data),
        "max": max(data),
        "sample_percentiles": percentiles[:5],
    }

if __name__ == "__main__":
    # Generate some fake datasets (inside the guard, so spawned workers
    # don't rebuild them when they re-import this module)
    datasets = [
        [random.gauss(100, 15) for _ in range(100_000)]
        for _ in range(8)
    ]

    with ProcessPoolExecutor(max_workers=4) as executor:
        analyses = list(executor.map(analyze_dataset, datasets))

    for i, stats in enumerate(analyses):
        print(f"Dataset {i}: mean={stats['mean']}, std={stats['std_dev']}")
```

On a quad-core machine, the best case is that 8 datasets finish in roughly the time it would take to process 2 sequentially. Expect somewhat less in practice, because each 100,000-element list has to be pickled and sent to a worker first. Either way it is genuine parallelism, not the cooperative-multitasking theater that threading gives you for CPU work.
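When the work items are small and numerous rather than few and large, the per-item cost of sending data between processes starts to dominate. The `chunksize` argument of `map()` (added in Python 3.5) batches items to reduce that cost. A minimal sketch, assuming a hypothetical `is_prime` workload and a hypothetical `count_primes` helper that accepts the executor class so the two pools can be compared:

```python
import math
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor

def is_prime(n):
    """Trial division: deliberately CPU-bound, but cheap per item."""
    if n < 2:
        return False
    return all(n % d for d in range(2, math.isqrt(n) + 1))

def count_primes(limit, executor_cls=ProcessPoolExecutor, chunksize=1_000):
    """Count primes below `limit`, sending work to the pool in chunks."""
    with executor_cls() as executor:
        # chunksize only matters for process pools; thread pools ignore it.
        return sum(executor.map(is_prime, range(limit), chunksize=chunksize))

if __name__ == "__main__":
    print(count_primes(100_000))                                   # processes
    print(count_primes(100_000, executor_cls=ThreadPoolExecutor))  # threads
```

The value 1,000 is an arbitrary starting point, not a recommendation; the right chunk size depends on how expensive each item is and is best found by measuring.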

The wait() Function: Batch Coordination

Sometimes you need to wait for a specific set of futures to complete before proceeding. wait() lets you do exactly that:

```python
from concurrent.futures import ThreadPoolExecutor, wait, FIRST_COMPLETED, ALL_COMPLETED
import random
import time

def worker(task_id):
    duration = random.uniform(0.5, 2.0)
    time.sleep(duration)
    return f"Task {task_id} complete ({duration:.2f}s)"

with ThreadPoolExecutor(max_workers=5) as executor:
    futures = [executor.submit(worker, i) for i in range(5)]

    # Wait until at least ONE future is done
    done, not_done = wait(futures, return_when=FIRST_COMPLETED)
    print("First to finish:")
    for f in done:
        print(f" → {f.result()}")
    print(f"\nStill running: {len(not_done)} tasks")

    # Now wait for ALL remaining
    done_all, _ = wait(not_done, return_when=ALL_COMPLETED)
    print("\nAll done:")
    for f in done_all:
        print(f" → {f.result()}")
```

`return_when` accepts three constants: `FIRST_COMPLETED`, `FIRST_EXCEPTION` (returns as soon as any future raises, or once all have finished if none do), and `ALL_COMPLETED`.
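`FIRST_EXCEPTION` is the one constant the example above does not exercise. The following sketch assumes one task that fails immediately and two hypothetical tasks held back by a `threading.Event`, so the outcome does not depend on timing:

```python
import threading
from concurrent.futures import ThreadPoolExecutor, wait, FIRST_EXCEPTION

release = threading.Event()  # keeps the healthy tasks running until we say so

def fails_fast():
    raise RuntimeError("bad input")

def waits_for_release(task_id):
    release.wait(timeout=5)
    return task_id

with ThreadPoolExecutor(max_workers=3) as executor:
    futures = [executor.submit(fails_fast)]
    futures += [executor.submit(waits_for_release, i) for i in range(2)]

    # Returns as soon as one future has raised; the others are still running.
    done, not_done = wait(futures, return_when=FIRST_EXCEPTION)
    failed = [f for f in done if f.exception() is not None]

    release.set()  # let the remaining tasks finish before the pool shuts down

print(len(failed), len(not_done))
# 1 2
```

`wait()` does not cancel or stop the remaining futures. Deciding what to do with `not_done` (cancel, keep waiting, or ignore) is left to the caller.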

Why This Was Revolutionary for Python 3.2

Here’s the thing about concurrent.futures that often gets lost: it wasn’t just a convenient API. It was a statement of intent from the Python core team about how they thought developers should interact with concurrency.

Before this module, Python’s concurrency story was fragmented. You had threading for one use case, multiprocessing for another, and a bunch of third-party libraries (eventlet, gevent, Twisted) filling gaps that the standard library refused to address. Every project had its own concurrency flavor.

concurrent.futures gave Python developers a lingua franca for concurrent programming: a shared vocabulary and pattern that worked across use cases. It also laid the conceptual groundwork for asyncio, which arrived in Python 3.4; native async/await syntax followed in Python 3.5. The `Future` concept from concurrent.futures directly informed the `asyncio.Future` class design.
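The two worlds still meet in practice: an asyncio event loop can hand blocking work to a concurrent.futures executor through `loop.run_in_executor()`. A minimal sketch, assuming a hypothetical `blocking_io` function that stands in for any call that cannot be awaited:

```python
import asyncio
import time
from concurrent.futures import ThreadPoolExecutor

def blocking_io(label):
    """Stand-in for a blocking call such as a legacy database driver."""
    time.sleep(0.1)
    return f"{label} finished"

async def main():
    loop = asyncio.get_running_loop()
    with ThreadPoolExecutor(max_workers=2) as pool:
        # run_in_executor wraps each pool job in an awaitable asyncio future.
        jobs = [loop.run_in_executor(pool, blocking_io, name) for name in ("a", "b")]
        return await asyncio.gather(*jobs)

results = asyncio.run(main())
print(results)  # ['a finished', 'b finished']
```

The event loop stays responsive while the threads sleep, and `gather()` returns the results in the order the jobs were passed in.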

Python 3.2’s Other Contributions

While concurrent.futures is the headliner, Python 3.2 shipped with several other notable improvements:

- `argparse` joined the standard library (replacing the aging `optparse`)
- `ssl` module improvements: better certificate verification and TLS support
- `functools.lru_cache`: the beloved memoization decorator that every Python developer now uses instinctively
- `reprlib` gained the `recursive_repr()` decorator
- `.pyc` files moved to a `__pycache__` directory: finally, no more `.pyc` files cluttering your source directories

Two improvements often lumped in with 3.2 actually belong to its neighbors: the `io` stack was rewritten in C in Python 3.1, making file I/O significantly faster, and `os.stat_result` gained nanosecond-precision timestamps in Python 3.3.

The functools.lru_cache deserves special mention. It arrived quietly in 3.2 and has since become one of the most useful decorators in the entire standard library:

```python
from functools import lru_cache

@lru_cache(maxsize=128)
def fibonacci(n):
    if n < 2:
        return n
    return fibonacci(n - 1) + fibonacci(n - 2)

# Without cache: roughly 40 billion recursive calls
# With cache: 51 unique calls (n = 0 through 50), everything else is a lookup
print(fibonacci(50))  # 12586269025, effectively instant
```

Quick Reference: concurrent.futures Cheat Sheet

| Feature | ThreadPoolExecutor | ProcessPoolExecutor |
|---|---|---|
| Best for | I/O-bound work | CPU-bound work |
| GIL bypass | No | Yes |
| Shared memory | Yes (with care) | No (separate processes) |
| Startup overhead | Low | Higher (process spawn) |
| `__main__` guard (Windows, macOS) | Not required | Required |
| Data serialization | Not needed | Pickle (objects must be serializable) |
| Max workers default (Python 3.8+) | `min(32, os.cpu_count() + 4)` | `os.cpu_count()` |

| Method / Function | Description |
|---|---|
| `executor.submit(fn, *args)` | Submit a single callable, returns `Future` |
| `executor.map(fn, iterable)` | Map function over iterable, returns iterator of results |
| `executor.shutdown(wait=True)` | Shut down executor and free resources |
| `future.result(timeout=None)` | Get result (blocks until ready) |
| `future.exception()` | Get exception if raised |
| `future.done()` | Check if finished |
| `future.cancel()` | Attempt cancellation |
| `future.add_done_callback(fn)` | Register completion callback |
| `as_completed(futures)` | Yield futures as they complete |
| `wait(futures, return_when=...)` | Wait for futures with control over when to return |
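The “Data serialization” row is the one that most often trips people up when moving from threads to processes. A quick way to check whether a callable or argument will cross the process boundary is to try pickling it. The sketch below assumes a hypothetical helper named `is_picklable`:

```python
import math
import pickle

def is_picklable(obj):
    """Return True if `obj` survives pickle.dumps, as process pools require."""
    try:
        pickle.dumps(obj)
        return True
    except (pickle.PicklingError, AttributeError, TypeError):
        return False

print(is_picklable(math.sqrt))    # True: importable by name
print(is_picklable(lambda x: x))  # False: anonymous, so no name to import
```

This is why functions passed to `ProcessPoolExecutor` are normally defined at module level rather than as lambdas or nested functions.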

The Verdict

Python 3.2 was never going to win any “most exciting release” awards. It didn’t introduce a shiny new syntax. It didn’t make headlines the way Python 3.0’s controversial compatibility breaks did. But it did something arguably more important: it made Python 3 worth using.

concurrent.futures in particular gave Python a concurrency story that was finally approachable — a high-level API that let you write parallel code without needing a computer science degree in lock-free data structures. It bridged the gap between threading and multiprocessing with a unified interface, handled exception propagation gracefully, and introduced the Future pattern that would become foundational for Python’s async ecosystem.

If you’re writing concurrent Python code today and you’re not using concurrent.futures, you’re probably making things harder than they need to be. Start with ThreadPoolExecutor for I/O work, reach for ProcessPoolExecutor when the CPU is your bottleneck, and let the executor take care of the rest.

Python 3.2 did the boring work. And sometimes, boring is exactly what you need.
