Cleaning the Slate: The Radical Engineering Behind Python 3.0

- Turker Senturk

- Software

- 19 Mar, 2026

- 8 min read

In the software world, backward compatibility is practically sacred. Libraries, frameworks, entire companies are built on the assumption that updating a language won’t torch everything you’ve already written. So when Guido van Rossum and the Python core team announced that Python 3 would deliberately break compatibility with Python 2, the developer community had exactly the reaction you’d expect: mild panic, spirited blog posts, and a migration phase that dragged on for over a decade.

But here’s the thing — they were right. Python 3 wasn’t a rebellion for rebellion’s sake. It was a carefully considered reset, a chance to fix the kind of design mistakes that only become obvious after millions of people have been quietly suffering through them for years. Think of it like a city deciding to switch from driving on the left side of the road to the right. Painful, chaotic, and totally necessary.

Let’s break down the four biggest changes that made Python 3 so different from its predecessor — and why each one actually mattered.

1. Unicode by Default: The End of the Character Encoding Nightmare

If you’ve never lost an afternoon to a UnicodeDecodeError, count yourself lucky. In Python 2, the default str type was essentially a bag of bytes — not text, bytes. It worked fine as long as you only ever dealt with plain ASCII characters (basically, English letters and numbers). The moment you tried to handle Turkish characters like ğ, ş, or ı — or Japanese, Arabic, Cyrillic, anything outside that narrow Latin alphabet — you were in trouble.

Python 2 did have a unicode type for proper text handling, and you could invoke it by writing u"Merhaba Dünya" with a special prefix. But this was opt-in. Most developers forgot, or didn’t know, or didn’t care until their app crashed spectacularly in production when a user typed their name in Korean.

Python 3 fixed this at the root. Every string (str) is now Unicode by default. There’s no u"" prefix needed, no mental overhead of tracking which variables are “real text” and which are “byte soup.” If you want raw bytes, you explicitly ask for them with b"...". The default is now the smart choice.
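To make the split concrete, here is a minimal Python 3 sketch of the text/bytes boundary. The sample string and variable names are purely illustrative, and UTF-8 is assumed as the encoding.

```python
# In Python 3, str holds Unicode text and bytes holds raw data.
text = "Merhaba Dünya, ğşı"        # str: a sequence of Unicode characters
data = text.encode("utf-8")        # bytes: produced by an explicit encode step

print(type(text).__name__, len(text))   # length counted in characters
print(type(data).__name__, len(data))   # length counted in bytes

# Decoding with the same encoding recovers the original text.
round_trip = data.decode("utf-8")

# Mixing the two types fails immediately instead of corrupting data quietly.
try:
    text + data
except TypeError as exc:
    mixing_error = type(exc).__name__

print(round_trip == text, mixing_error)
```

The byte length comes out larger than the character length because UTF-8 uses more than one byte for the non-ASCII letters, which is exactly the distinction Python 2's str blurred.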

The practical impact of this change is enormous. It’s one of the reasons Python became a go-to language for data science, international applications, and NLP work: the language stopped treating non-English text as a second-class citizen.

| Feature | Python 2 | Python 3 |
|---|---|---|
| Default str type | Byte sequence | Unicode text |
| Non-ASCII support | Opt-in (u"...") | Built-in |
| Encoding errors | Common and confusing | Much rarer |
| Byte handling | Implicit | Explicit (b"...") |

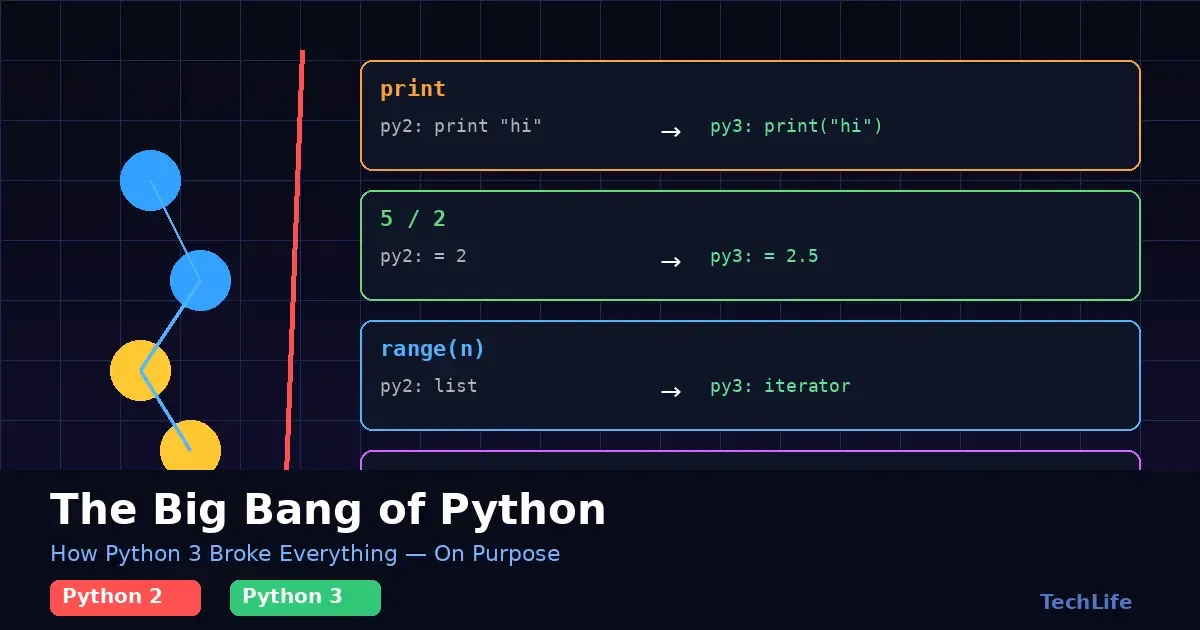

2. print Becomes a Function: Small Change, Huge Implications

This is the one that made Python 2 developers groan the loudest, mostly because it broke every single script they’d ever written in the most visible way possible. In Python 2, print was a statement — a special syntactic keyword built into the language, like if or for.

    # Python 2: works fine
    print "Hello, World"

    # Python 3: SyntaxError
    print "Hello, World"

In Python 3, print became a regular function, one that you call with parentheses, like every other function in the language.

    # Python 3
    print("Hello, World")

To a beginner, this looks like a trivial cosmetic change. It’s not. Making print a real function unlocked a range of capabilities that the old statement syntax handled awkwardly or not at all:

- sep parameter: Control what separator goes between multiple items. print("a", "b", "c", sep="-") prints a-b-c.
- end parameter: Control what character terminates the line. Default is \n, but you can change it.
- file parameter: Redirect output to a file or any other stream, not just stdout.
- First-class object: You can now pass print as an argument to another function. One caveat: map() is lazy in Python 3, so map(print, my_list) prints only once the result is consumed, for example with list(map(print, my_list)).
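The parameters listed above can be tried in a few lines. This is a small sketch rather than a complete tour; the helper apply_to_each and the in-memory buffer are illustrative names, not part of any library.

```python
import io

# sep: choose the separator placed between items
print("a", "b", "c", sep="-")

# end: replace the default trailing newline
print("no newline here", end="")
print()  # finish the line

# file: send output to any writable stream, here an in-memory buffer
buffer = io.StringIO()
print("logged", "line", file=buffer)
captured = buffer.getvalue()

# print is an ordinary object, so it can be passed around like any function
def apply_to_each(func, items):
    """Call func once for every item (hypothetical helper for illustration)."""
    for item in items:
        func(item)

apply_to_each(print, ["x", "y"])

# map() is lazy in Python 3: nothing is printed until the result is consumed
lazy = map(print, ["printed", "on demand"])
results = list(lazy)  # the printing happens here
```

The last two lines are worth a second look: creating the map object prints nothing, which ties this change to the lazy iterators covered later in the article.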

It seems small. But this change is part of a broader Python 3 philosophy: there should be one obvious way to do things, and special cases should be avoided. print being a statement was a special case. Now it’s not.

3. Integer Division Gets Fixed: 5 / 2 Finally Equals 2.5

This one caused so many subtle bugs that entire debugging sessions were lost to it. In Python 2, dividing two integers with the / operator performed integer division, meaning it discarded the fractional part and returned a whole number. Strictly speaking it rounded down rather than truncated, so -5 / 2 gave -3.

    # Python 2
    5 / 2  # Returns 2, not 2.5
    7 / 3  # Returns 2, not 2.333...

This behavior made sense in a low-level systems context, where integer arithmetic is fast and explicit. But for the average Python script (data analysis, financial calculations, scientific computing) it was a landmine. You’d do perfectly correct-looking arithmetic and silently get wrong answers.

Python 3 changed / to always return a float:

    # Python 3
    5 / 2  # Returns 2.5
    7 / 3  # Returns 2.333...

And for the cases where you genuinely want floor division (rounding down to the nearest integer), there is the // operator. It had been available since Python 2.2, but in Python 3 it became the explicit way to ask for that behavior:

    # Python 3: explicit floor division
    5 // 2  # Returns 2
    7 // 3  # Returns 2

The beauty of this fix is that it makes intent explicit. If you write 5 / 2, you want 2.5. If you write 5 // 2, you want 2. No surprises, no silent truncation. Python became a language where the obvious-looking code actually does the obvious thing, which is, frankly, the whole point.
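A few edge cases are easier to see in code than in prose. The sketch below runs under Python 3 and uses illustrative variable names; it shows that / returns a float even when the result is exact, and that // floors toward negative infinity rather than truncating toward zero.

```python
# True division always yields a float, even for exact results.
true_div = 5 / 2        # 2.5
exact_div = 4 / 2       # 2.0, not 2

# Floor division keeps integers as integers.
floor_div = 5 // 2      # 2

# Floor division rounds down, which differs from truncation for negatives.
neg_floor = -5 // 2         # -3
neg_truncated = int(-5 / 2)  # -2

# divmod returns the floor quotient and the remainder in one call.
quotient, remainder = divmod(7, 3)   # (2, 1)

# With a float operand, // still floors but returns a float.
float_floor = 7.0 // 2   # 3.0

print(true_div, exact_div, floor_div, neg_floor, neg_truncated,
      quotient, remainder, float_floor)
```

If a calculation needs truncation toward zero for negative numbers, int() or math.trunc() on the true quotient is the usual choice, not //.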

| Expression | Python 2 Result | Python 3 Result |
|---|---|---|
| 5 / 2 | 2 (integer!) | 2.5 (float) |
| 7 / 3 | 2 (integer!) | 2.333... (float) |
| 5 // 2 | 2 | 2 |
| 7 // 3 | 2 | 2 |

4. Views and Iterators: Doing More With Less Memory

This is arguably the most technically significant change of the bunch, even though it’s the least visible in day-to-day coding. In Python 2, functions like range(), zip(), map(), and dictionary methods like .keys() and .values() all returned lists — full, materialized, in-memory lists.

This was fine when you were working with small data. But if you wrote something like:

    # Python 2
    for i in range(1000000):
        do_something(i)

Python 2 would first allocate a list with one million integers in memory, then start the loop. For large numbers, this was wasteful. For extremely large numbers, it was a program crash waiting to happen.

Python 3 made these functions lazy. Instead of computing all values upfront and storing them in memory, they return lightweight objects (range() returns a compact range object, for example) that compute each value on demand, only when the loop actually needs it.

    # Python 3
    for i in range(1000000):
        do_something(i)

The same code in Python 3 uses a tiny, constant amount of memory regardless of the range size. The values are generated one at a time, used, and discarded. No giant list sitting in RAM waiting to be iterated.
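The memory claim can be checked directly. The sketch below assumes CPython, where sys.getsizeof reports the size of the object itself; exact byte counts vary by version and platform, so the code compares sizes instead of printing fixed numbers.

```python
import sys

small = range(10)
huge = range(10**9)

# In CPython both objects report the same small size: a range stores
# its bounds and step, never the numbers in between.
same_footprint = sys.getsizeof(small) == sys.getsizeof(huge)

# Materializing even a modest range into a list costs far more.
range_size = sys.getsizeof(range(100_000))
list_size = sys.getsizeof(list(range(100_000)))

# A range still behaves like a sequence without building a list.
last_value = huge[-1]
contains = 999_999_999 in huge
length = len(huge)

print(same_footprint, range_size < list_size, last_value, contains, length)
```

Indexing, len(), and membership tests on integers all work without walking a billion values, because they are computed from the bounds.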

The same principle applies to zip(), map(), filter(), and the dictionary methods. zip(), map(), and filter() return iterators that produce values lazily, while .keys() and .values() return views onto the dictionary rather than copies. If you genuinely need a full list, you can always call list() explicitly:

    my_list = list(range(1000000))  # Explicit: you asked for it

This change had a compounding effect on Python’s suitability for data science and big data processing. When you’re working with datasets that don’t fit comfortably in memory, lazy evaluation isn’t just a nice-to-have. It’s what makes the program work at all.
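Laziness comes with a few behaviors that surprise people moving over from Python 2. The following sketch, with made-up sample data, shows three of them: iterators are single-pass, a range can be iterated repeatedly, and dictionary views track later changes.

```python
# zip() and map() return iterators: once consumed, they are empty.
pairs = zip([1, 2, 3], "abc")
first_pass = list(pairs)     # [(1, 'a'), (2, 'b'), (3, 'c')]
second_pass = list(pairs)    # []

# Values are computed only when requested.
squares = map(lambda n: n * n, [1, 2, 3])
first_square = next(squares)   # 1
remaining = list(squares)      # [4, 9]

# A range is a reusable sequence, not a one-shot iterator.
numbers = range(3)
twice = (list(numbers), list(numbers))   # ([0, 1, 2], [0, 1, 2])

# Dictionary views reflect changes made after the view was created.
inventory = {"apples": 3}
keys_view = inventory.keys()
inventory["pears"] = 5
current_keys = list(keys_view)   # ['apples', 'pears']

print(first_pass, second_pass, first_square, remaining, twice, current_keys)
```

When the same results are needed more than once, wrapping the iterator in list() once and reusing the list is the simplest fix.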

| Function | Python 2 Returns | Python 3 Returns |
|---|---|---|
| range(n) | Full list in memory | Lazy range object |
| zip(a, b) | Full list of tuples | Lazy zip iterator |
| map(f, a) | Full list | Lazy map iterator |
| dict.keys() | List of keys | Dictionary view |
| dict.values() | List of values | Dictionary view |

Why It Took So Long (And Why It Was Still Worth It)

Python 3 was released in December 2008. Python 2 officially reached end-of-life on January 1, 2020, more than eleven years later. The migration was, by any measure, one of the longest and most turbulent version transitions in programming history. Major libraries like NumPy, Django, and SQLAlchemy had to be ported, which meant substantial changes to large codebases. Entire companies had to audit and rewrite their internal code. Some never did, and simply stayed on Python 2 until it became a security liability.

Was it worth it? The numbers suggest yes. Python has consistently ranked as one of the world’s most popular programming languages throughout the 2020s, powering everything from machine learning pipelines to web backends to scientific research. The clean Unicode handling, the consistent arithmetic, the memory-efficient iterators — these aren’t just theoretical improvements. They’re the foundation that made Python the lingua franca of modern data science and AI development.

Guido van Rossum famously said that the transition was “not a revolution, but a cleanup.” In hindsight, it was both. A cleanup so thorough it felt like a revolution to everyone who had to live through it — and an improvement so fundamental that it’s now almost impossible to imagine Python without it.

Breaking things on purpose is a bold move. Breaking things correctly, with a clear vision of what you’re building toward, is something else entirely.
