Google's TurboQuant Compresses AI Memory by 6x — With Zero Accuracy Loss

- Turker Senturk

- AI , Technology

- 27 Mar, 2026

- 6 min read

Every time you have a long conversation with an AI, your GPU is quietly sweating. It has to keep track of everything you’ve said — every token, every context — in something called the key-value (KV) cache. The longer the conversation, the bigger that cache gets. For a 70-billion parameter model serving 512 users at once, the KV cache alone can consume 512 GB of GPU memory — nearly four times the memory the model weights themselves need. That’s not a hypothetical bottleneck. That’s the bill you’re paying every month.

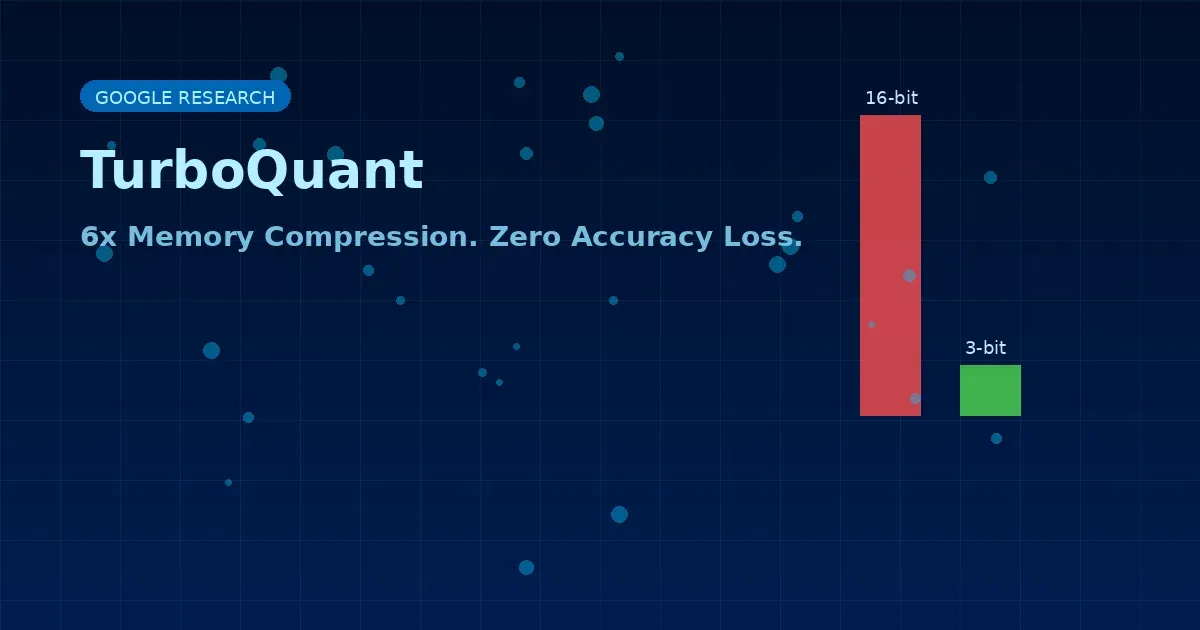

On March 25, 2026, Google Research published TurboQuant, a compression algorithm designed to attack this problem directly. The results are, to put it mildly, dramatic: at least 6x memory reduction, up to 8x speedup in attention computation on NVIDIA H100 GPUs, and — the headline — zero accuracy loss. No retraining. No fine-tuning. No calibration data required.

The paper will be formally presented at ICLR 2026 in late April, co-authored by research scientist Amir Zandieh and VP Vahab Mirrokni, along with collaborators at Google DeepMind, KAIST, and New York University.

What Is the KV Cache, and Why Does It Matter?

Think of the KV cache as the model’s working memory during a conversation. Every token the model processes gets stored as key-value pairs, and attention is computed across all of them every time a new token is generated. This is what makes LLMs contextually aware — but it’s also what makes them expensive at scale.

Traditional quantization methods (compressing numbers from 16-bit or 32-bit precision down to smaller formats) can reduce cache size, but they typically introduce small overhead: stored normalization constants, calibration artifacts, dataset-specific tuning. These extras chip away at the actual compression gains.

TurboQuant eliminates that overhead entirely through a two-stage process:

-

PolarQuant: Converts data vectors from Cartesian coordinates to polar coordinates — separating each vector into a magnitude and a set of angles. Because angular distributions are predictable, PolarQuant skips the expensive per-block normalization step that conventional quantizers require.

-

QJL (Quantized Johnson-Lindenstrauss transform): Handles the inner product estimation required for transformer attention, applying a 1-bit correction that makes estimates provably unbiased.

The result: KV cache values compressed down to just 3 bits per value — compared to the standard 16 bits — with mathematically provable distortion bounds.

The Numbers Are Real

Google benchmarked TurboQuant against the LongBench suite (question answering, code generation, summarization) and the Needle-In-A-Haystack test (finding a specific piece of information buried in up to 104,000 tokens of context). Results on Gemma, Mistral, and Llama-3.1-8B-Instruct models showed TurboQuant matching or outperforming the existing KIVI baseline across all tasks.

In attention logit computation benchmarks on H100 GPUs, 4-bit TurboQuant delivered up to 8x performance increase over 32-bit unquantized keys.

| Method | Memory Reduction | Accuracy Loss | Calibration Required |

|---|---|---|---|

| KIVI (baseline) | ~2.6x | Minimal | No |

| TurboQuant | 6x+ | Zero | No |

| NVIDIA KVTC | 20x | <1% | Yes (per-model) |

For context: jumping from KIVI’s 2.6x compression to TurboQuant’s 6x is a generational improvement for a no-calibration method. NVIDIA’s KVTC achieves higher raw compression at 20x, but requires a one-time PCA calibration step per model and has been tested on a wider range of model sizes (up to 70B parameters) — an area where TurboQuant’s published benchmarks, which top out at roughly 8B parameters, leave an open question.

Google’s DeepSeek Moment?

The comparison to DeepSeek was inevitable. When Cloudflare CEO Matthew Prince saw the announcement, he posted: “This is Google’s DeepSeek.” The reference is to the January 2025 moment when the Chinese AI lab demonstrated competitive model performance at a fraction of the typical cost — a reminder that efficiency gains can be as strategically important as raw capability improvements.

The Silicon Valley crowd went further. Within hours, “Pied Piper” was trending on tech Twitter, referencing the fictional compression startup from HBO’s Silicon Valley. The algorithm’s no-calibration, lossless-compression character does share a certain narrative DNA with the show’s premise. Google’s researchers, if they had a stronger sense of humor, might have named it accordingly.

What It Actually Means in Practice

TurboQuant has direct commercial relevance beyond language models. Google notes in the research blog that the algorithm also significantly improves vector search — the technology underlying semantic similarity lookups that powers Google Search, YouTube recommendations, and advertising targeting. On the GloVe benchmark, TurboQuant achieved superior recall ratios over competing methods without requiring large codebooks or dataset-specific tuning.

For cloud operators and anyone running large-scale LLM inference, the implications are straightforward: if TurboQuant performs as advertised at larger model scales, it reduces the memory footprint per user session significantly, which either lowers infrastructure costs or allows more concurrent users on the same hardware.

A 70B parameter model reduced from 512 GB KV cache to roughly 85 GB for 512 concurrent users is not a small difference.

There’s a catch worth noting: no official code has been released yet. Independent developers have already built working implementations in PyTorch, MLX (Apple Silicon), and C/CUDA for llama.cpp from the paper’s math alone — validation that the core claims hold up. An official release is widely expected around Q2 2026. For now, it’s research, not a production tool.

The Bigger Picture

The timing of TurboQuant’s publication — as AI infrastructure spending continues to accelerate, with Meta committing tens of billions in compute capacity and hyperscalers planning hundreds of billions in data center investment — makes it more than an academic curiosity. A technology that reduces memory requirements by 6x doesn’t reduce total spending by 6x (memory is one component of a much larger system), but it does change the cost curve for inference in meaningful ways.

Wells Fargo analyst Andrew Rocha noted that TurboQuant “directly attacks the cost curve for memory in AI systems,” adding the observation that compression algorithms have historically existed without fundamentally altering procurement volumes — a reasonable caution against over-indexing on the announcement. But he also acknowledged the concern isn’t unfounded.

The question is whether Google deploys this in its own inference stack, and how quickly the rest of the ecosystem follows.

Sources:

Share :

Stay Ahead in Tech

Join thousands of developers and tech enthusiasts. Get our top stories delivered safely to your inbox every week.

No spam. Unsubscribe at any time.