Gemma 4: Google's Most Capable Open Models Are Here — and They Run on Your Laptop

- Turker Senturk

- AI

- 03 Apr, 2026


There’s a familiar tension in the open-source AI world: the models that are actually capable enough to be useful tend to require hardware that most people don’t have, while the models you can run locally are often… fine. Serviceable. Not exactly impressive.

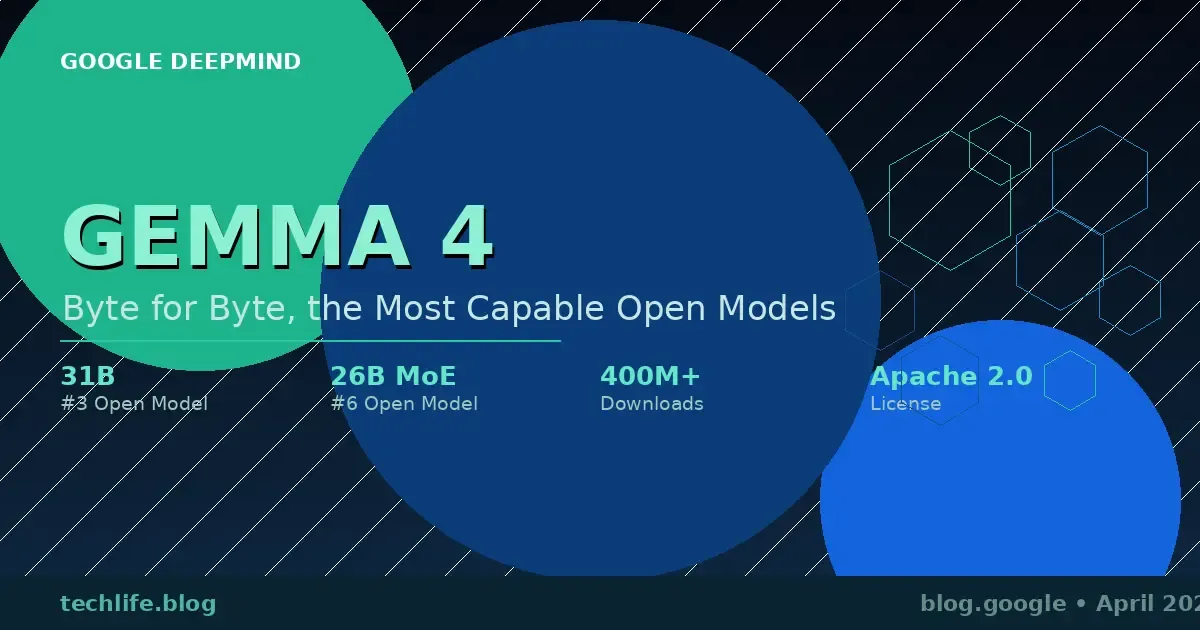

Google just took a serious swing at closing that gap. On April 2, 2026, the company announced Gemma 4 — the latest generation of its open model family, built on the same research foundation as Gemini 3, and released under an Apache 2.0 license. The headline claim: unprecedented intelligence-per-parameter. The proof: the 31B model currently sits at #3 on the Arena AI open model leaderboard, while the 26B variant holds #6 — both outcompeting models up to 20 times their size.

Four hundred million downloads across the Gemma family since its launch. Over 100,000 community-built variants. Google is clearly not treating this as a side project.

What Is Gemma 4, Exactly?

Gemma 4 is a family of four models, released simultaneously, each targeting a different slice of the hardware spectrum:

| Model | Type | Active Parameters | Best For |
|---|---|---|---|
| Gemma 4 E2B | Effective 2B | ~2B | Mobile, IoT, edge devices |
| Gemma 4 E4B | Effective 4B | ~4B | On-device multimodal tasks |
| Gemma 4 26B | Mixture of Experts | ~3.8B | Low-latency local inference |
| Gemma 4 31B | Dense | 31B | Maximum quality, fine-tuning |

The “E” in E2B and E4B stands for effective — these models are engineered to activate only a fraction of their total parameters during inference, which keeps RAM usage and battery drain manageable on edge hardware. The 26B model takes a similar approach with a Mixture of Experts (MoE) architecture, activating just 3.8 billion parameters at runtime while drawing on a much larger pool of learned knowledge.
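To make the "only a fraction of the parameters is active" idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not Gemma 4's actual architecture: the number of experts, the value of `k`, the router scores, and the toy scalar "experts" are all assumptions chosen for illustration, since the announcement does not describe the routing scheme.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalise over the chosen experts
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_scores, k):
    """Run only the selected experts and mix their outputs by routing weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))

# Toy setup (hypothetical): 8 experts, each a scalar function; 2 run per token.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
router_scores = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

selected = route_top_k(router_scores, k=2)
output = moe_forward(1.0, experts, router_scores, k=2)
active_fraction = len(selected) / len(experts)
```

In this toy case only two of the eight experts execute for the input, so a quarter of the expert parameters do any work, while the full set still has to be stored in memory.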

The 31B dense model is the one that’s going to dominate benchmark conversations. It’s also the one you’ll want for serious fine-tuning.

How Does Gemma 4 Actually Perform?

Google’s benchmarks show strong results across math, instruction-following, code generation, and multimodal tasks. But the more interesting signal comes from the Arena AI leaderboard — a crowdsourced evaluation where human raters compare model outputs directly, without knowing which model produced which response. It’s harder to game than academic benchmarks, and Gemma 4’s position near the top of the open-model rankings there is genuinely notable.

The 31B model ranked #3 among all open models globally as of April 1, 2026. For context: it fits on a single 80GB NVIDIA H100 GPU unquantized. Quantized versions run on consumer-grade GPUs. That combination — top-tier quality, accessible hardware requirements — is the whole pitch.
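The hardware claim can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes "unquantized" means 16-bit weights and counts only the weights themselves; the KV cache, activations, and framework overhead need additional memory, so these figures are lower bounds rather than measured requirements.

```python
def weight_memory_gb(params_billion, bits_per_param):
    """Approximate memory for the weights alone, in decimal gigabytes."""
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9

PARAMS_31B = 31  # billions of parameters, from the model name

bf16_gb = weight_memory_gb(PARAMS_31B, 16)  # about 62 GB: under an 80 GB H100
int8_gb = weight_memory_gb(PARAMS_31B, 8)   # about 31 GB
int4_gb = weight_memory_gb(PARAMS_31B, 4)   # about 15.5 GB: consumer-GPU range
```

Under these assumptions, 16-bit weights leave roughly 18 GB of headroom on an 80 GB card, and 4-bit quantization brings the weights within reach of GPUs with 16 to 24 GB of memory.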

For developers who need raw throughput rather than maximum quality, the 26B MoE model delivers faster tokens-per-second by keeping active parameters lean. Think of it as the sports car variant: lighter, quicker off the line, optimized for responsiveness.

What Can Gemma 4 Actually Do?

Beyond raw benchmark numbers, Gemma 4 was specifically designed around capabilities that matter for real-world developer workflows:

Advanced Reasoning

Multi-step planning, logical inference, complex math — the areas where smaller models typically fall apart. Gemma 4 shows meaningful improvements here, particularly in benchmarks that require extended chains of reasoning rather than single-turn pattern matching.

Agentic Workflows

Native support for function calling, structured JSON output, and system instructions out of the box. This matters because agentic AI (models that don’t just respond but actually do things, interacting with tools, APIs, and external services) is where most of the interesting applied AI work is happening right now. Support for this use case was designed in from the start rather than bolted on afterward.
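On the application side, function calling usually comes down to parsing a structured tool call from the model and dispatching it to real code. The following is a minimal sketch of that pattern. The JSON shape (`name` plus `arguments`), the `get_weather` tool, and the example model output are all hypothetical; the article does not specify the exact format Gemma 4 emits.

```python
import json

def get_weather(city: str) -> str:
    """Hypothetical tool; a real one would query a weather service."""
    return f"Sunny in {city}"

# Registry of tools the model is allowed to call.
TOOLS = {"get_weather": get_weather}

def dispatch_tool_call(model_output: str) -> str:
    """Parse a JSON tool call emitted by the model and run the matching tool."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return f"error: model output is not valid JSON ({exc.msg})"
    if not isinstance(call, dict):
        return "error: expected a JSON object"
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    if not isinstance(args, dict):
        return "error: arguments must be a JSON object"
    try:
        return TOOLS[name](**args)
    except TypeError as exc:
        return f"error: bad arguments for {name}: {exc}"

# Stand-in for model output (assumed format, not Gemma 4's documented one).
example_output = '{"name": "get_weather", "arguments": {"city": "Sofia"}}'
result = dispatch_tool_call(example_output)
```

Returning error strings instead of raising lets the calling loop feed the failure back to the model so it can retry, which is one common design choice for agent loops.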

Code Generation

High-quality offline code assistance. The pitch here is turning your local workstation into a private AI coding assistant that doesn’t send your codebase to a cloud endpoint. For anyone working with sensitive codebases, that’s not a small thing.

Vision and Audio

All four models natively process images and video, with support for variable resolutions and specific strengths in OCR and chart understanding. The two edge models (E2B and E4B) add native audio input for speech recognition and understanding — which makes them genuinely interesting for on-device voice applications.

Long Context Windows

The edge models support 128K token context windows. The larger models go up to 256K. Passing an entire repository or a lengthy technical document in a single prompt is no longer a workaround — it’s a supported use case.
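Before passing a whole repository in one prompt, it helps to check whether it fits. This sketch packs files into a context budget using a rough four-characters-per-token heuristic. That ratio, the reading of "128K" and "256K" as 128,000 and 256,000 tokens, the output reserve, and the file names are all assumptions; a real check would count tokens with the model's own tokenizer.

```python
CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary

def estimate_tokens(text: str) -> int:
    """Estimate the token count with ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)

def pack_documents(docs, context_limit, reserve_for_output=4096):
    """Greedily keep documents, in order, that still fit in the prompt budget."""
    budget = context_limit - reserve_for_output
    chosen, used = [], 0
    for name, text in docs:
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue  # skip anything that would overflow the window
        chosen.append(name)
        used += cost
    return chosen, used

EDGE_CONTEXT = 128_000   # edge models (assumed exact value)
LARGE_CONTEXT = 256_000  # larger models (assumed exact value)

# Hypothetical repository contents, sized by character count.
docs = [
    ("README.md", "x" * 8_000),
    ("core.py", "x" * 400_000),
    ("big_dump.sql", "x" * 560_000),
]

edge_files, edge_used = pack_documents(docs, EDGE_CONTEXT)
large_files, large_used = pack_documents(docs, LARGE_CONTEXT)
```

With these made-up sizes, the smaller window takes the first two files and skips the third, while the larger window holds all three.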

Multilingual Support

Natively trained on 140+ languages. Gemma 4 isn’t a primarily English model with multilingual fine-tuning layered on top; multilingual capability was baked in from the training stage.

Running on Everything: The Hardware Story

One of the more technically interesting aspects of Gemma 4 is how deliberately Google has matched model architectures to hardware tiers.

The E2B and E4B models were developed in close collaboration with Google’s Pixel team, Qualcomm Technologies, and MediaTek. They run completely offline on Android devices, Raspberry Pi hardware, and NVIDIA Jetson Orin Nano boards, with what Google describes as near-zero latency. Android developers can already prototype agentic flows using these models through the AICore Developer Preview, with a path toward forward compatibility with Gemini Nano 4.

The 26B and 31B models, while larger, are sized to fit on hardware that’s genuinely within reach. A single 80GB H100 handles the unquantized 31B. Quantized versions run on gaming GPUs. That’s not “accessible if you’re a well-funded research lab” — it’s accessible if you have a reasonably modern workstation.

For cloud deployment, Google Cloud support is available through Vertex AI, Cloud Run, and GKE, with TPU-accelerated serving options for workloads that need massive scale.

The Apache 2.0 License: Why It Matters

Previous Gemma generations shipped under a custom license that, while permissive in many ways, wasn’t technically open source and came with certain restrictions that gave some developers pause. Google listened to that feedback.

Gemma 4 is released under the Apache 2.0 license, one of the most permissive and widely recognized open-source licenses in existence. It allows commercial use, modification, and redistribution, provided the license text and attribution notices are preserved, and it leaves organizations in complete control of their data, infrastructure, and model deployments. There’s no requirement to share modifications, no royalty obligations, no use-case carve-outs.

For enterprises evaluating AI infrastructure with data sovereignty requirements, or for developers who want to build commercially viable products without negotiating license agreements, this is a significant change. Apache 2.0 is the kind of license that legal teams already know how to handle.

Real-World Applications Already in the Wild

Google didn’t wait for the launch to showcase what Gemma-class models can do in practice. Two examples stand out:

BgGPT — developed by INSAIT, this is a pioneering Bulgarian-language model built on the Gemma architecture. It’s a concrete demonstration of what fine-tuning can accomplish: taking a general-purpose base model and producing a specialized, high-quality tool for a specific language community that might otherwise be underserved by mainstream AI development.

Cell2Sentence-Scale — a collaboration with Yale University that used Gemma models to explore new pathways for cancer therapy discovery. This is the kind of application that makes the “open” in open models meaningful: researchers at academic institutions can work with these models directly, fine-tune them on domain-specific data, and potentially produce results that wouldn’t be possible with proprietary API access.

The Ecosystem: Where Can You Get It?

Google has made Gemma 4 available across essentially every major platform a developer might want to use:

Direct access: Google AI Studio (31B and 26B MoE), Google AI Edge Gallery (E4B and E2B), Android Studio Agent Mode, ML Kit GenAI Prompt API.

Model hubs: Hugging Face, Kaggle, Ollama.

Inference frameworks: vLLM, llama.cpp, MLX, LM Studio, SGLang, Unsloth, Baseten, Docker.

Training and fine-tuning: Google Colab, Vertex AI, Keras, MaxText, Tunix, and consumer GPU setups.

Hardware optimization: Out-of-the-box support for NVIDIA infrastructure from Jetson Orin Nano to Blackwell GPUs, AMD GPUs via ROCm, and Google’s Trillium and Ironwood TPUs.

Day-one support across Hugging Face Transformers, TRL, Transformers.js, Candle, NVIDIA NIM, and NeMo means you can likely drop Gemma 4 into your existing workflow without major tooling changes.
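As one illustration of what dropping a model into an existing workflow can look like, here is a small sketch that sends a prompt to a locally running Ollama server over its HTTP API using only the Python standard library. The model tag `gemma4` is a hypothetical placeholder, since this article does not list the published tag names, and the code assumes Ollama is listening on its default local port.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # hypothetical tag; check the Ollama library for the real name

def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(prompt: str, model: str = MODEL_TAG, timeout: float = 120.0) -> str:
    """Send one prompt and return the generated text.

    Requires a running Ollama server with the model already pulled.
    """
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

Calling `generate("Summarize this changelog: ...")` would return the model's reply as a string, with the prompt never leaving the local machine.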

What Does This Mean for the Open Model Landscape?

The open-source AI model space has been moving fast, with Meta’s Llama series, Mistral, and various other releases competing for developer adoption. Gemma 4 enters this field with a few distinct advantages: it’s built on Gemini 3 research infrastructure, it’s genuinely optimized for edge hardware in a way that most competitors aren’t, and the Apache 2.0 licensing removes a meaningful barrier that the previous Gemma license created.

The 400 million download figure across the Gemma family isn’t just a marketing number — it reflects a real developer community that has already built tooling, fine-tuned variants, and integrated these models into production systems. Gemma 4 inherits that ecosystem while substantially raising the capability ceiling.

The Gemmaverse — Google’s term for the community-built ecosystem around these models — now includes over 100,000 variants. That’s a lot of institutional knowledge about what these architectures can and can’t do, and Gemma 4 gives that community significantly more to work with.

The Techlife Verdict

Gemma 4 is the most credible challenge to the idea that “open” and “capable” are in tension for large language models. A top-three open model on Arena AI that runs on a single H100 — or, quantized, on your gaming GPU — is a meaningful achievement. The Apache 2.0 license removes the last major friction point that kept some developers and organizations from fully committing to the Gemma ecosystem.

The edge models are the sleeper story here. Multimodal, audio-capable, 128K context, running offline on Android devices and Raspberry Pi hardware — if the performance holds up in real-world applications, that’s a genuinely new category of on-device capability.

Whether Gemma 4 pulls significant developer share away from Llama, Mistral, or other open alternatives will depend on benchmark results under real-world conditions, fine-tuning ease, and how well the edge models perform outside controlled tests. But on paper, this is the strongest Gemma release yet — and arguably the most competitive open model family Google has shipped.

Source: https://blog.google/innovation-and-ai/technology/developers-tools/gemma-4/
