Snowflake's Arctic Long Sequence Training: How to Train LLMs on 15 Million Tokens Without Selling a Kidney

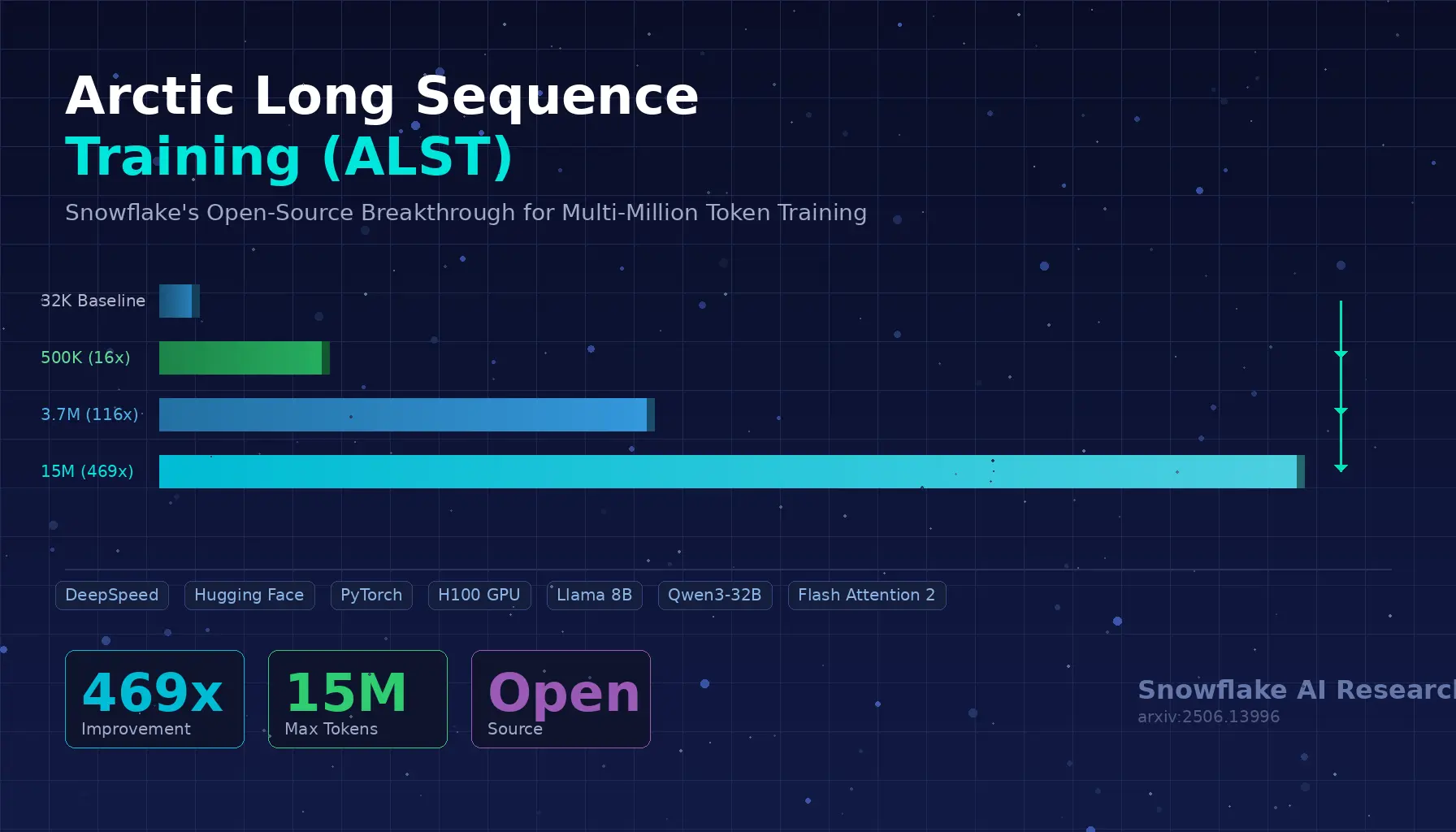

Let’s be honest: training a large language model on long sequences has been the AI equivalent of trying to fit a king-size mattress through a studio apartment door. The mattress is your data, the door is your GPU memory, and you’re standing there sweating, wondering why nobody designed this better. Snowflake AI Research just handed you a bigger door (or, more accurately, a set of clever tricks that make your mattress foldable). Meet Arctic Long Sequence Training (ALST), the open-source framework that takes you from a pathetic 32K-token ceiling to a jaw-dropping 15 million tokens on just four nodes of NVIDIA H100 GPUs. That’s roughly a 469x increase in sequence length (15,000,000 / 32,000 ≈ 469), and yes, it works with your existing Hugging Face models out of the box. ...